As a part of the work for Cloud Analytics that Dave, Bryan, Brendan, and I been doing, we’ve added several USDT probes to Node. As I’ve been doing a lot of the integration work and working with Ryan Dahl to get them into Node itself, I thought that I would document what we’ve added and talk a bit about what information we can get from the probes. Dave has a blog entry that explains how you can use these probes and Cloud Analytics to understand what’s going on with your application.

Currently Node has probes that let us track the following events:

Before we jump too far ahead of ourselves, we should talk a bit about how to enable DTrace USDT probes with node. To get the DTrace probes in node, you can pass the option –with-dtrace to configure. This flag will fail on systems that aren’t SunOS (i.e. Illumos and OpenSolaris). Now, OS X and FreeBSD do have DTrace. You can certainly hack the wscript for node to get the probes compiled into OS X and FreeBSD; however, they have not been tested. On OS X you can get the probes to fire; however, the translator does not currently work on OS X, which limits their power. I have not tested this on FreeBSD 9 which added support for the USDT provider. So, let’s build node with support for DTrace!

$ ./configure --with-dtrace && make && make install

So, now we can go ahead and list all of the probes that DTrace has for node. To do this we need to fire up our newly compiled node and then run the following DTrace command to see all the probes that we have:

rm@devel ~ $ dtrace -l -n node*:::

ID PROVIDER MODULE FUNCTION NAME

4331 node12090 node _ZN4node14dtrace_gc_doneEN2v86GCTypeENS0_15GCCallbackFlagsE gc-done

4332 node12090 node _ZN4node15dtrace_gc_startEN2v86GCTypeENS0_15GCCallbackFlagsE gc-start

4333 node12090 node _ZN4node26DTRACE_HTTP_CLIENT_REQUESTERKN2v89ArgumentsE http-client-request

4334 node12090 node _ZN4node27DTRACE_HTTP_CLIENT_RESPONSEERKN2v89ArgumentsE http-client-response

4335 node12090 node _ZN4node26DTRACE_HTTP_SERVER_REQUESTERKN2v89ArgumentsE http-server-request

4336 node12090 node _ZN4node27DTRACE_HTTP_SERVER_RESPONSEERKN2v89ArgumentsE http-server-response

4337 node12090 node _ZN4node28DTRACE_NET_SERVER_CONNECTIONERKN2v89ArgumentsE net-server-connection

4338 node12090 node _ZN4node22DTRACE_NET_SOCKET_READERKN2v89ArgumentsE net-socket-read

4339 node12090 node _ZN4node23DTRACE_NET_SOCKET_WRITEERKN2v89ArgumentsE net-socket-write

4340 node12090 node _ZN4node21DTRACE_NET_STREAM_ENDERKN2v89ArgumentsE net-stream-end

As we can see there are several probes here, for this entry we’re going to leave out net-stream-end and net-server-connection. If we include the path to the node.d file, we can even view all the arguments of the probe. The node.d file is a translator that is installed into $PREFIX/lib/dtrace. As long as you have the libdir patch (or move the file into /usr/lib/dtrace) then you should be all set and we can run:

rm@devel ~ $ dtrace -L $PREFIX/lib/dtrace -v -n node*:::

ID PROVIDER MODULE FUNCTION NAME

4331 node12090 node _ZN4node14dtrace_gc_doneEN2v86GCTypeENS0_15GCCallbackFlagsE gc-done

Probe Description Attributes

Identifier Names: Private

Data Semantics: Private

Dependency Class: Unknown

Argument Attributes

Identifier Names: Evolving

Data Semantics: Evolving

Dependency Class: ISA

Argument Types

args[0]: int

args[1]: int

4332 node12090 node _ZN4node15dtrace_gc_startEN2v86GCTypeENS0_15GCCallbackFlagsE gc-start

Probe Description Attributes

Identifier Names: Private

Data Semantics: Private

Dependency Class: Unknown

Argument Attributes

Identifier Names: Evolving

Data Semantics: Evolving

Dependency Class: ISA

Argument Types

args[0]: int

args[1]: int

4333 node12090 node _ZN4node26DTRACE_HTTP_CLIENT_REQUESTERKN2v89ArgumentsE http-client-request

Probe Description Attributes

Identifier Names: Private

Data Semantics: Private

Dependency Class: Unknown

Argument Attributes

Identifier Names: Evolving

Data Semantics: Evolving

Dependency Class: ISA

Argument Types

args[0]: node_http_request_t *

args[1]: node_connection_t *

4334 node12090 node _ZN4node27DTRACE_HTTP_CLIENT_RESPONSEERKN2v89ArgumentsE http-client-response

Probe Description Attributes

Identifier Names: Private

Data Semantics: Private

Dependency Class: Unknown

Argument Attributes

Identifier Names: Evolving

Data Semantics: Evolving

Dependency Class: ISA

Argument Types

args[0]: node_connection_t *

4335 node12090 node _ZN4node26DTRACE_HTTP_SERVER_REQUESTERKN2v89ArgumentsE http-server-request

Probe Description Attributes

Identifier Names: Private

Data Semantics: Private

Dependency Class: Unknown

Argument Attributes

Identifier Names: Evolving

Data Semantics: Evolving

Dependency Class: ISA

Argument Types

args[0]: node_http_request_t *

args[1]: node_connection_t *

4336 node12090 node _ZN4node27DTRACE_HTTP_SERVER_RESPONSEERKN2v89ArgumentsE http-server-response

Probe Description Attributes

Identifier Names: Private

Data Semantics: Private

Dependency Class: Unknown

Argument Attributes

Identifier Names: Evolving

Data Semantics: Evolving

Dependency Class: ISA

Argument Types

args[0]: node_connection_t *

4338 node12090 node _ZN4node22DTRACE_NET_SOCKET_READERKN2v89ArgumentsE net-socket-read

Probe Description Attributes

Identifier Names: Private

Data Semantics: Private

Dependency Class: Unknown

Argument Attributes

Identifier Names: Evolving

Data Semantics: Evolving

Dependency Class: ISA

Argument Types

args[0]: node_connection_t *

args[1]: int

4339 node12090 node _ZN4node23DTRACE_NET_SOCKET_WRITEERKN2v89ArgumentsE net-socket-write

Probe Description Attributes

Identifier Names: Private

Data Semantics: Private

Dependency Class: Unknown

Argument Attributes

Identifier Names: Evolving

Data Semantics: Evolving

Dependency Class: ISA

Argument Types

args[0]: node_connection_t *

args[1]: int

For the rest of this entry we’ll go over the different probes and their arguments. I’ll detail what they are and how you can get some useful information from them. In a future entry I’ll discuss how you can use this information.

When an HTTP request comes in it will fire the http-server-request probe. The probe is fired once node receives all of the HTTP headers for the incoming connection. Thus the probe fires right before the HTTP socket emits the event ‘request’. The http-server-request probe has two arguments. The first argument tells us information about the HTTP request. We can use it to get the method and url requested. The second argument tells us information about who is connecting to our HTTP server. We can view all this information with the following DTrace snippet:

# cat -n /tmp/node-httpd-request.d

1 #pragma D option quiet

2

3 node21291:::http-server-request

4 {

5 printf("Connection from %s:%d on fd %d - Request %s: %s\n",

6 args[1]->remoteAddress, args[1]->remotePort, args[1]->fd,

7 args[0]->method, args[0]->url);

8 }

# dtrace -L$PREFIX/lib/dtrace -s node-httpd-request.d

Connection from 10.99.99.254:63486 on fd 7 - Request GET: /

Connection from 10.99.99.254:63487 on fd 7 - Request GET: /

Connection from 10.99.99.254:63488 on fd 7 - Request GET: /

Connection from 10.99.99.254:63489 on fd 7 - Request GET: /

Connection from 10.99.99.254:63490 on fd 7 - Request GET: /

^C

The corresponding probe, http-server-response, will fire once we have finished sending all the data in our response to the client. This probe no longer has the information on the incoming HTTP request, but does have all of the information about who we are talking to. Here’s all the information that we have:

# cat node-http-response.d

1 #pragma D option quiet

2

3 node*:::http-server-response

4 {

5 printf("Connection from %s:%d on fd %d\n",

6 args[0]->remoteAddress, args[0]->remotePort, args[0]->fd);

7 }

# dtrace -L$PREFIX/lib/dtrace -s node-http-response.d

Connection from 127.0.0.1:59149 on fd 8

Connection from 127.0.0.1:39130 on fd 9

Connection from 10.99.99.254:63691 on fd 7

Connection from 10.99.99.254:55004 on fd 19

Connection from 10.99.99.254:55000 on fd 14

Connection from 10.99.99.254:55276 on fd 16

Connection from 10.99.99.254:54977 on fd 11

Now, if we want to track the time that has elapsed, we have to be a little smarter. In node we could have multiple connections going on at the same time, and these are all going to occur in the same thread. This means that we cannot use the traditional thread-local variables that we might do via self->foo. So, how do we actually correlate one http-server-request probe with its http-server-response probe? Each connection is using a separate socket and each socket has its own file descriptor. There is a one to one correlation between sockets and file descriptors. A file descriptor will only be reused if the socket it was previously associated with was closed. So if we wanted to track the latency we can use the file descriptor to correlate events and write a small script that looks something like:

rm@devel ~ $ cat -n/tmp/httpd-lat.d

1 #pragma D option quiet

2

3 node*:::http-server-request

4 {

5 latency[args[1]->fd] = timestamp;

6 }

7

8 node*:::http-server-response

9 {

10 printf("Request took %d nanoseconds to complete\n",

11 timestamp - latency[args[0]->fd]);

12 }

rm@devel ~ $ dtrace -L$PREFIX/lib/dtrace -s /tmp/httpd-lat.d

Request took 444589 nanoseconds to complete

Request took 939339 nanoseconds to complete

Request took 353588 nanoseconds to complete

Request took 1431926 nanoseconds to complete

Request took 334404 nanoseconds to complete

^C

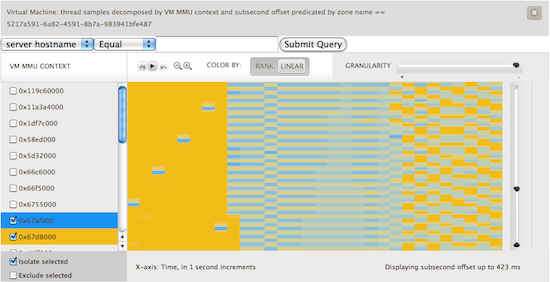

Now, if we run this with the sample Node ‘Hello, world!’ HTTP server we certainly can get some rather interesting data. But the value comes from being able to visualize this data, something that Brendan has looked at extensively.

The HTTP Client probes have been designed to correspond to their HTTP Server counterparts. There are both http-client-request and http-client-response probes. The http-client-request gets fired when an outgoing request is made and the http-client response probe is fired when we have received the response. In terms of arguments, the HTTP Client probes work identically to their server counterparts. The http-client-request has information about what the underlying connection and the HTTP resource we have asked for. The http-client-response probe only has information on the underlying socket.

Let’s look at the same information as we did with the HTTP Server probes, but this time with the client:

# cat -n /tmp/node-httpc-request.d

1 #pragma D option quiet

2

3 node*:::http-client-request

4 {

5 printf("Connection to %s:%d on fd %d - Request %s: %s\n",

6 args[1]->remoteAddress, args[1]->remotePort, args[1]->fd,

7 args[0]->method, args[0]->url);

8 }

# dtrace -L$PREFIX/lib/dtrace -s /tmp/node-httpc-request.d

Connection to 127.0.0.1:23184 on fd 10 - Request GET: /ca/instrumentations/1/value/raw?start_time=1298733995

Connection to 127.0.0.1:23185 on fd 12 - Request GET: /ca/instrumentations/4/value/raw?start_time=1298733995

Connection to 127.0.0.1:23184 on fd 10 - Request GET: /ca/instrumentations/1/value/raw?start_time=1298733997

Connection to 127.0.0.1:23184 on fd 13 - Request GET: /ca/instrumentations/5/value/raw?start_time=1298733997

Connection to 127.0.0.1:23185 on fd 12 - Request GET: /ca/instrumentations/4/value/raw?start_time=1298733997

^C

Again, we can do a similar thing for the response:

# cat -n /tmp/node-httpc-response.d

1 #pragma D option quiet

2

3 node*:::http-client-response

4 {

5 printf("Connection to %s:%d on fd %d\n",

6 args[0]->remoteAddress, args[0]->remotePort, args[0]->fd);

7 }

# dtrace -L$PREFIX/lib/dtrace -s /tmp/node-httpc-response.d

Connection to 127.0.0.1:23185 on fd 15

Connection to 127.0.0.1:23185 on fd 13

Connection to 127.0.0.1:23184 on fd 18

Connection to 127.0.0.1:23184 on fd 12

Connection to 127.0.0.1:23184 on fd 10

^C

With all of this information we can gather a lot of useful information. Because both probes have the file descriptor, we can link them together to get the latency, as we did previously. Because a node program may be making several HTTP requests to external services, having the ability to know which request is inducing how much latency is quite valuable.

This next set of probes is in fact two different probes, one which fires on every read from a socket and one that fires on every write to a socket. In addition to the information about the socket itself, we get two very useful numbers: the size of the data and the amount buffered. Whenever node does a write to a socket, it attempts to write all of it to the kernel at once. However, if the write would block, node stores the data in userland and will write it out a bit later. When this happens, the call to write returns false. If you aren’t careful or checking the return value from write that buffer can grow quite quickly in userland and eat up a lot of memory. Let’s look at how we can use these two probes:

# cat -n socket.d

1 #pragma D option quiet

2

3 node*:::net-socket-read,

4 node*:::net-socket-write

5 {

6 printf("%s:%d -- %d bytes -- %d buffered\n", args[0]->remoteAddress,

7 args[0]->remotePort, arg1, args[0]->bufferSize);

8 }

# dtrace -L$PREFIX/lib/dtrace -s /tmp/socket.d

10.99.99.254:55000 -- 314 bytes -- 0 buffered

10.99.99.254:55000 -- 2740 bytes -- 0 buffered

10.99.99.254:55000 -- 2 bytes -- 0 buffered

10.99.99.254:55000 -- 5 bytes -- 0 buffered

^C

Javascript is a language where you don’t have to explicitly manage memory like you do in C. Instead, the runtime uses various techniques to allocate and later determine what memory can be freed. This technique is known as garbage collection. Node is using v8 under the hood to do this work. v8 gives us the ability to be notified right before garbage collection starts and ends. We have two pieces of information from the garbage collection process: the type of garbage collection and various flags.

To understand the different types, we have to dive into the v8 header file. Node v0.4.0 is currently using v8 version v3.1. If we open up /usr/include/v8.h we see the following for valid garbage collection types:

2472 enum GCType {

2473 kGCTypeScavenge = 1 << 0,

2474 kGCTypeMarkSweepCompact = 1 << 1,

2475 kGCTypeAll = kGCTypeScavenge | kGCTypeMarkSweepCompact

2476 };

From this we can see that v8 supports two different kinds of garbage collection. To help understand the differences, it's important to know that v8 uses the notion of generations. Generational garbage collection makes the observation that objects fall into two camps: those that come and go rather quickly and those that stick around for a while. Mark and Sweep compaction is a traditional form of garbage collection which works well for older objects, but takes much longer. The Scavenge algorithm works well for short lived objects and is generally much quicker than Mark and Sweep. Dave helps explain why we care about GC.

The other argument to our GC probes are a series of flags. Currently this looks like:

2478 enum GCCallbackFlags {

2479 kNoGCCallbackFlags = 0,

2480 kGCCallbackFlagCompacted = 1 << 0

2481 };

When using the probes, there are a few useful things to know. When GC occurs, nothing else is going to run. Furthermore, v8 uses the same thread to call the GC prologue and epilogue handlers. These callbacks are respectively fired immediately before and immediately after the GC operation. Because we have the same thread calling it, we can use a thread local variable in D to keep track of and answer the question of how much latency was due to garbage collection. We can do this with the following D script:

# cat -n /tmp/node-gc.d

1 #pragma D option quiet

2

3 node*:::gc-start

4 {

5 self->ts = timestamp;

6 }

7

8 node*:::gc-done

9 {

10 printf("Spent %d nanoseconds doing Garbage Collection\n",

11 timestamp - self->ts);

12 self->ts = 0

13 }

# dtrace -s /tmp/node-gc.d

Spent 780604 nanoseconds doing Garbage Collection

Spent 50416942 nanoseconds doing Garbage Collection

Spent 33276517 nanoseconds doing Garbage Collection

Spent 14498463 nanoseconds doing Garbage Collection

Spent 3847561 nanoseconds doing Garbage Collection

^C

Those are all the probes that we have currently added to node. All of them are available in the stock node src that you can download and compile yourself. The idea behind all of the probes that we added is to be able to answer a question directly related to what your application is doing and to be able to understand why and where issues are coming from.